Modern solutions, especially those built in the cloud using microservice patterns, will be made from many components. Although these provide excellent power and flexibility, they also offer numerous attack points.

Therefore, when designing our security controls, we need to consider multiple layers of defense. Always assume that your primary measures will fail, and ensure you have backup controls in place as well.

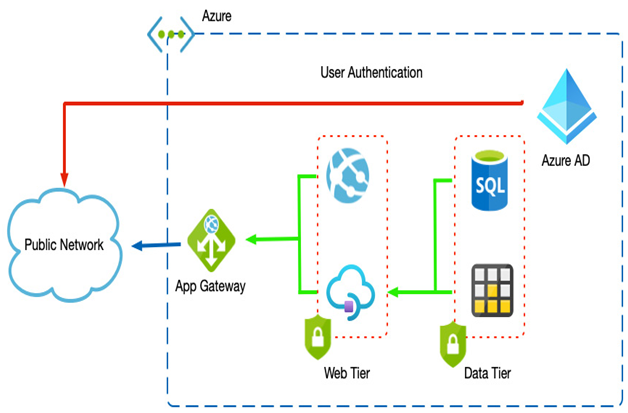

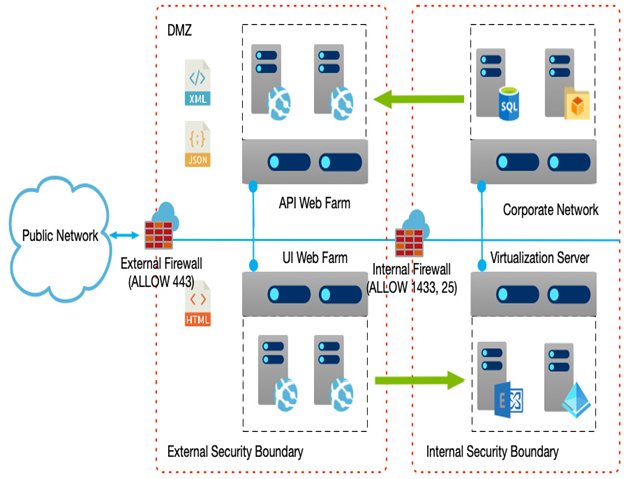

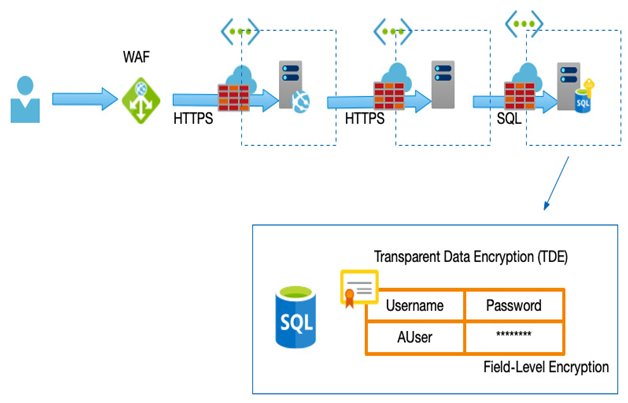

This approach is known as Defense-In-Depth (DID). An example would be data protection in a database that serves an e-commerce website. Enforcing an authentication mechanism on the database might be your primary control, but you need to consider how to protect your application if those credentials are compromised. An example of a multilayer implementation might include (but not be limited to) the following:

- Network segregation between the database and web app

- Firewalls to only allow access from the web app

- Transparent Data Encryption (TDE) on the database

- Field-level encryption on sensitive data (for example, credit card numbers and passwords)

- A web application firewall (WAF)

The following diagram shows an example of a multilayer implementation:

Figure 2.1 – Multiple-layer protection example
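The layering idea can also be sketched in code. The following is a minimal illustration, not a production design: the subnet, the password, and all function names are hypothetical, and the masking function is only a stand-in for real field-level encryption. It shows that each layer is checked independently, so stolen credentials alone are not enough to reach the data.

```python
import hmac
import ipaddress

# Hypothetical values, for illustration only.
WEB_APP_SUBNET = ipaddress.ip_network("10.0.1.0/24")
DB_PASSWORD = "example-only-secret"


def network_layer_allows(source_ip: str) -> bool:
    """Firewall-style check: only the web app subnet may reach the database."""
    return ipaddress.ip_address(source_ip) in WEB_APP_SUBNET


def auth_layer_allows(supplied_password: str) -> bool:
    """Authentication check using a constant-time comparison."""
    return hmac.compare_digest(supplied_password.encode(), DB_PASSWORD.encode())


def mask_card_number(card_number: str) -> str:
    """Stand-in for field-level protection: show only the last four digits.

    Assumes the card number is longer than four characters.
    """
    return "*" * (len(card_number) - 4) + card_number[-4:]


def read_card(source_ip: str, supplied_password: str, card_number: str) -> str:
    """Every layer must pass on its own before any data is returned."""
    if not network_layer_allows(source_ip):
        raise PermissionError("blocked by network layer")
    if not auth_layer_allows(supplied_password):
        raise PermissionError("blocked by authentication layer")
    return mask_card_number(card_number)
```

In this sketch, a caller with the correct password but an address outside the web app subnet is still rejected by the network layer, and even a fully authorized caller receives only the masked value.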

We have covered many different technical layers that we can use to protect our services, but it is equally important to consider the human element, as this is often the first point of entry for hacks.

User education

Many attacks originate from either a phishing email or social media data harvesting.

With this in mind, alongside an excellent technical defense, a solid education plan is an invaluable way to stop attacks before they begin.

Training users in acceptable online practices helps prevent them from leaking important information, and any education plan should therefore include the following:

- Social media data harvesting: Social media platforms are a gold mine for hackers; users rarely consider that the information they routinely supply could be used to access password-protected systems. Birth dates, geographical locations, and even pet names and relationship information are routinely shared publicly, and all of these are often used as answers to security questions when confirming your identity.

Some platforms also present quizzes and games whose questions match common security challenges.

- Phishing emails: A typical exploit is to send an email stating that an account has been suspended or a new payment has been made. A link directs the user to a fake website that looks identical to the official site, so they enter their login details, which are then captured by the hacker. These details can be used not only to access the targeted site but also to obtain additional information such as an address, contact information, and, as stated previously, answers to common security questions.

- Password policies: Many people reuse the same password. If one system is successfully hacked, that same password can then be used across other sites. Educating users about password managers and the dangers of password reuse can protect your platform against such exploits.
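To make the phishing point concrete in training material, it can help to show why a link that looks official may not be. The sketch below is illustrative only: `OFFICIAL_HOST` and `is_official_link` are hypothetical names, and an exact hostname match is just one simple check, not a complete anti-phishing control.

```python
from urllib.parse import urlsplit

# Hypothetical official hostname, used only for illustration.
OFFICIAL_HOST = "shop.example.com"


def is_official_link(url: str) -> bool:
    """Return True only for HTTPS links whose hostname exactly matches OFFICIAL_HOST."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname == OFFICIAL_HOST
```

Under these assumptions, `https://shop.example.com.evil.test/login` and `https://shop.example.com@evil.test/login` are both rejected, even though each contains the official name, because the hostname the browser would actually contact is different.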

This section has looked at the importance of designing security into our solutions throughout, from understanding how and why we may be attacked to common defenses across different layers. Perhaps the critical point is that good design should include multiple layers of protection across your applications.

Next, we will look at how we can protect our systems against failure—this could be hardware, software, or network failure, or even an attack.