Older IT systems were monolithic. The first business systems consisted of a large mainframe computer that users interacted with through dumb terminals—simple screens and keyboards with no processing power of their own.

The software that ran on them was often built similarly—they were often unwieldy chunks of code. There is a particularly famous photograph of the National Aeronautics and Space Administration (NASA) computer scientist Margaret Hamilton standing by a stack of printed code that is as tall as she is—this was the code that ran the Apollo Guidance Computer (AGC).

In these systems, the biggest concern was computing resources, and therefore architecture was about managing these resources efficiently. Security was primarily performed by a single user database contained within this monolithic system. While the internet did exist in a primitive way, external communications and, therefore, the security around them didn’t come into play. In other words, as the entire solution was essentially one big computer, there was a natural security boundary.

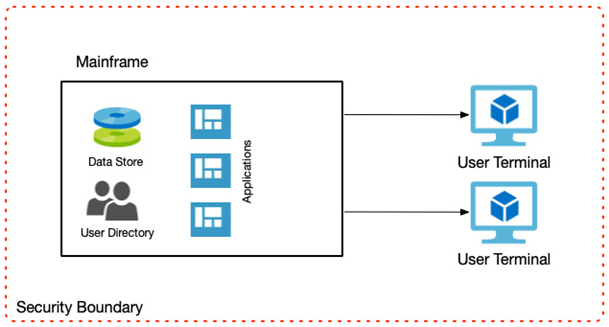

If we examine the following diagram, we can see that in many ways, the role of an architect dealt with fewer moving parts than today, and many of today’s complexities, such as security, didn’t exist because so much was intrinsic to the mainframe itself:

Figure 1.1 – Mainframe computing

Mainframe computing slowly gave way to personal computing, so next, we will look at how the PC revolution changed systems, and therefore design requirements.

Personal computing

The PC era brought about a business computing model in which you had lower-powered servers that delivered one or two duties—for example, a file server, a print server, or an internal email server.

PCs now connected to these servers over a local network and performed much of the processing themselves.

Early on, each of these servers might have had a user database to control access. However, this was very quickly addressed. The notion of a directory server quickly became the norm so that now we still have a single user database, as in the days of the mainframe; however, the information in that database must control access to services running on other servers.

Security had now become more complex as the resources were distributed, but there was still a naturally secure boundary—that of the local network.

Software also started to become more modular in that individual programs were written to run on single servers that performed discrete tasks; however, these servers and programs might have needed to communicate with each other.

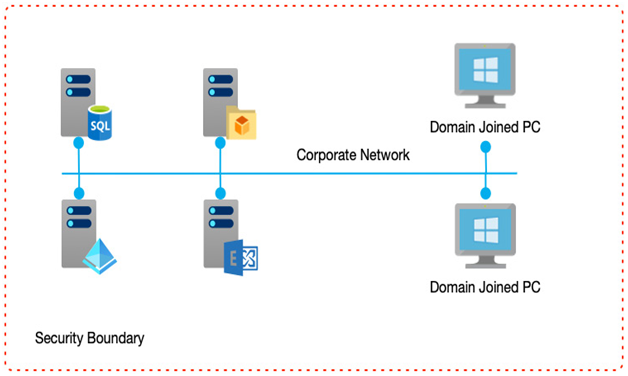

The following diagram shows a typical server-based system whereby individual servers provide discrete services, but all within a corporate network:

Figure 1.2 – The personal computing era

Decentralizing applications into individual components running on their own servers enabled a new type of software architecture to emerge—that of N-tier architecture. N-tier architecture is a paradigm whereby the first tier would be the user interface, and the second tier the database. Each was run on a separate server and was responsible for providing those specific services.

As systems developed, additional tiers were added—for example, in a three-tier application the database moved to the third tier, and the middle tier encapsulated business logic—for example, performing calculations, or providing a façade over the database layer, which in turn made the software easier to update and expand.

As PCs effectively brought about a divergence in hardware and software design, so too did the role of an architect also split. It now became more common to see architects who specialized in hardware and networking, with responsibilities for communication protocols and role-based security, and software architects who were more concerned with development patterns, data models, and user interfaces.

The lower-cost entry for PCs also vastly expanded their use; now, smaller businesses could start to leverage technologies. Greater adoption led to greater innovation—and one such advancement was to make more efficient use of hardware—through the use of virtualization.